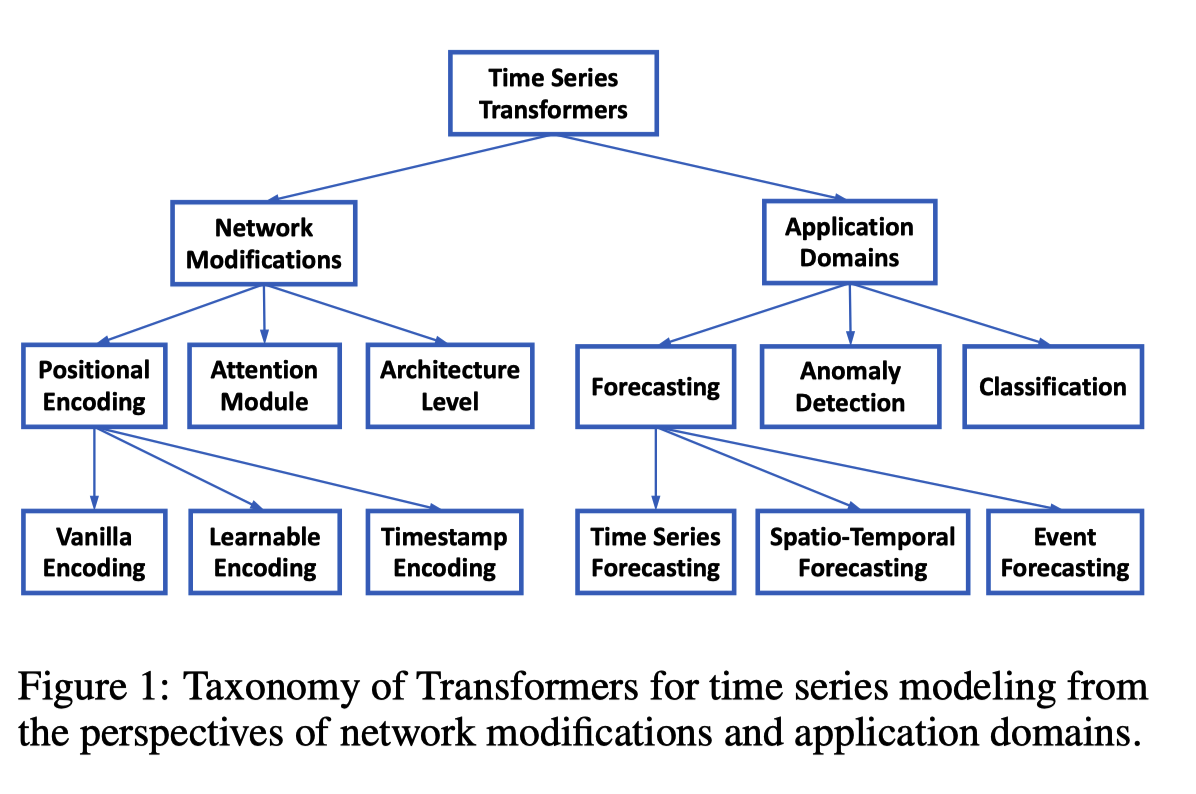

@Transformers in Time Series: A Survey

@Informer: Beyond Efficient Transformer for Long Sequence Time-Series Forecasting

[[Abstract]]

[[Attachments]]

Long sequence [[Time Series Forecasting]] (LSTF)

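Problems to solve when applying [[Transformer]] to LSTF #card

- self-attention has $O(L^2)$ computational complexity

- memory grows with the stacked multi-layer encoder/decoder structure

- dynamic decoding makes prediction slow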

Network architecture

ProbSparse self-attention

- Replaces inner product self-attention

- [[Sparse Transformer]] combines row outputs and column inputs

- [[LogSparse Transformer]] cyclical pattern

- [[Reformer]] locality-sensitive hashing (LSH) self-attention

- [[Linformer]]

- [[Transformer-XL]] [[Compressive Transformer]] use auxiliary hidden states to capture long-range dependency

- [[Longformer]]

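- Problems with other work on optimizing self-attention

- Lack of theoretical analysis

- Every head of multi-head self-attention uses the same optimization strategy

- Self-attention dot-product scores follow a long-tail distribution

- A small number of dot-product pairs contribute most of the attention score

- In practice: an element of a sequence is usually strongly similar or related to only a few other elements

- The i-th query is denoted $q_i$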

- $\mathcal{A}\left(q_i, K, V\right)=\sum_j \frac{k\left(q_i, k_j\right)}{\sum_l k\left(q_i, k_l\right)} v_j=\mathbb{E}_{p\left(k_j \mid q_i\right)}\left[v_j\right]$

- $p\left(k_j \mid q_i\right)=\frac{k\left(q_i, k_j\right)}{\sum_l k\left(q_i, k_l\right)}$

- $k\left(q_i, k_j\right)=\exp \left(\frac{q_i k_j^T}{\sqrt{d}}\right)$

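- How query sparsity is measured

- The [[KL Divergence]] between $p(k_j \mid q_i)$ and the uniform distribution ([[均匀分布]]) q

- q is the uniform distribution, i.e. every key has probability $\frac{1}{L}$

- If a query's attention distribution is close to uniform, every probability is near $\frac{1}{L}$, which is small; such a query contributes little.

- The more p differs from q, the more useful the query

- The order of p and q here differs from the paper ((9bc63e03-0f2f-4639-b481-5d7925ba8858))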

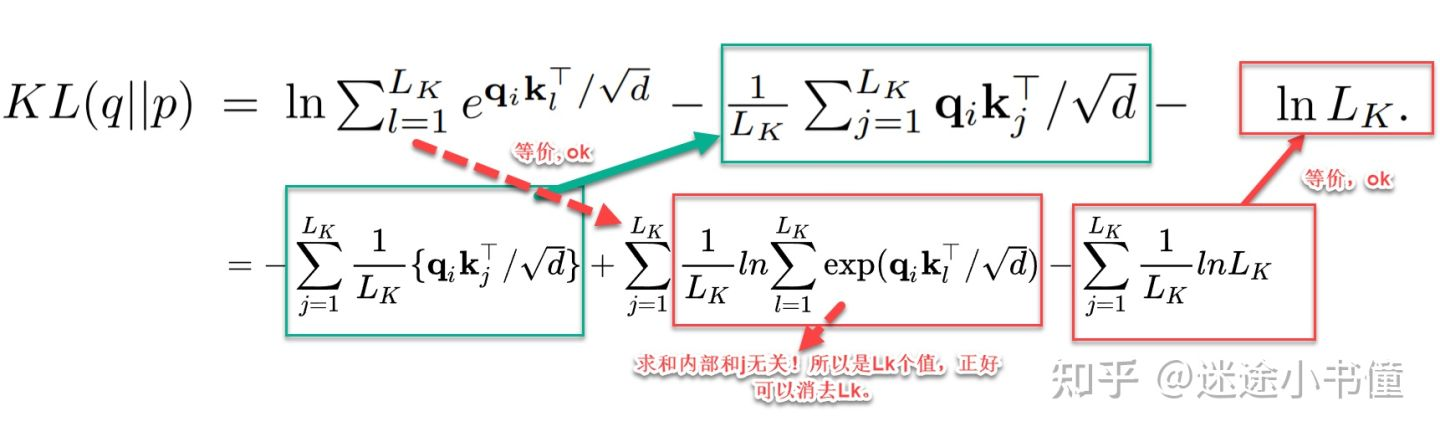

- $KL(q \| p)=\ln \sum_{l=1}^{L_K} e^{q_i k_l^T / \sqrt{d}}-\frac{1}{L_K} \sum_{j=1}^{L_K} q_i k_j^T / \sqrt{d}-\ln L_K$

- Obtained by substituting the definitions above and simplifying; dropping the constant $\ln L_K$ gives:

- $M\left(q_i, K\right)=\ln \sum_{l=1}^{L_K} e^{q_i k_l^T / \sqrt{d}}-\frac{1}{L_K} \sum_{j=1}^{L_K} q_i k_j^T / \sqrt{d}$

- The first term is the classic log-sum-exp (LSE) problem

- Sparsity measurement $M\left(q_i, K\right)$

- $\ln L_K \leq M\left(q_i, K\right) \leq \max_j\left\{\frac{q_i k_j^T}{\sqrt{d}}\right\}-\frac{1}{L_K} \sum_{j=1}^{L_K}\left\{\frac{q_i k_j^T}{\sqrt{d}}\right\}+\ln L_K$

- The LSE term is replaced by the maximum, i.e. by the $k_j$ closest to the current $q_i$; this is what motivates the top-N selection below

- $\bar{M}\left(\mathbf{q}_i, \mathbf{K}\right)=\max_j\left\{\frac{\mathbf{q}_i \mathbf{k}_j^{\top}}{\sqrt{d}}\right\}-\frac{1}{L_K} \sum_{j=1}^{L_K} \frac{\mathbf{q}_i \mathbf{k}_j^{\top}}{\sqrt{d}}$

- $\mathcal{A}(\mathbf{Q}, \mathbf{K}, \mathbf{V})=\operatorname{Softmax}\left(\frac{\overline{\mathbf{Q}} \mathbf{K}^{\top}}{\sqrt{d}}\right) \mathbf{V}$

- $\bar{Q}$ is a sparse matrix: only the top-u queries under the sparsity measurement are non-zero
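A minimal pure-Python sketch of the two sparsity measurements above, intended only to make the bound concrete. The helper names (`scaled_scores`, `sparsity_exact`, `sparsity_max_mean`) and the toy vectors are illustrative assumptions, not taken from the paper's code.

```python
import math

def scaled_scores(q, keys):
    """Scaled dot products q.k_j / sqrt(d) of one query against all keys."""
    d = len(q)
    return [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]

def sparsity_exact(q, keys):
    """M(q, K): log-sum-exp of the scores minus their arithmetic mean."""
    s = scaled_scores(q, keys)
    m = max(s)  # subtract the max before exp for numerical stability
    lse = m + math.log(sum(math.exp(x - m) for x in s))
    return lse - sum(s) / len(s)

def sparsity_max_mean(q, keys):
    """M-bar(q, K): the max score stands in for the log-sum-exp term."""
    s = scaled_scores(q, keys)
    return max(s) - sum(s) / len(s)

# Toy data (hypothetical): four keys on the axes of a 2-d space.
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
peaked_query = [4.0, 0.0]  # aligns strongly with a single key
flat_query = [0.0, 0.0]    # every key gets the same score (uniform attention)

for name, q in [("peaked", peaked_query), ("flat", flat_query)]:
    print(name, round(sparsity_exact(q, keys), 4), round(sparsity_max_mean(q, keys), 4))
```

The flat query sits exactly at the lower bound $\ln L_K$, while the peaked query scores higher under both measurements, which is the ordering the top-N selection relies on.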

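- Procedure

- For each query, randomly sample N keys; N defaults to $5 \ln L$

- Under the assumption that dot products follow a long-tail distribution, each query's sparsity score only needs to be computed against the sampled keys

- Compute the sparsity score of every query

- Select the N queries with the highest sparsity score; N defaults to $5 \ln L$

- Compute the dot products of only these N queries with all keys to obtain their attention output

- The remaining L-N queries are not computed; their output is simply the mean of the self-attention layer's input values, mean(V)

- Apart from the outputs at the N selected query indices, the other L-N embeddings are identical. The result therefore contains redundant information, which is why max-pooling can be applied in the next step

- This keeps the input and output sequence length of every ProbSparse self-attention layer equal to L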


- Reduces time and space complexity to $$O(L_K \log L_Q)$$
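The procedure above can be sketched in plain Python as follows. This is a simplified single-head illustration under stated assumptions: the function name `probsparse_attention`, the constant `c=5`, the per-query sampling with a seeded `random.Random`, and the requirement that sequences have at least two elements are all choices made for this sketch, not the reference implementation.

```python
import math
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def softmax(xs):
    m = max(xs)
    e = [math.exp(x - m) for x in xs]
    z = sum(e)
    return [x / z for x in e]

def probsparse_attention(Q, K, V, c=5, seed=0):
    """Single-head sketch; assumes len(Q) >= 2 and len(K) >= 2."""
    rng = random.Random(seed)
    L_q, L_k, d = len(Q), len(K), len(Q[0])
    n_keys = min(L_k, math.ceil(c * math.log(L_k)))  # keys sampled per query
    n_top = min(L_q, math.ceil(c * math.log(L_q)))   # queries that get full attention

    # Steps 1-3: sparsity score (max minus mean) on a random sample of keys.
    scores = []
    for q in Q:
        sample = [K[j] for j in rng.sample(range(L_k), n_keys)]
        s = [dot(q, k) / math.sqrt(d) for k in sample]
        scores.append(max(s) - sum(s) / len(s))

    # Step 4: keep the queries with the highest sparsity score.
    top = sorted(range(L_q), key=lambda i: scores[i], reverse=True)[:n_top]

    # Step 6: every other position outputs mean(V).
    dim_v = len(V[0])
    mean_v = [sum(v[t] for v in V) / len(V) for t in range(dim_v)]
    out = [list(mean_v) for _ in range(L_q)]

    # Step 5: full softmax attention only for the selected queries.
    for i in top:
        w = softmax([dot(Q[i], k) / math.sqrt(d) for k in K])
        out[i] = [sum(w[j] * V[j][t] for j in range(L_k)) for t in range(dim_v)]
    return out, sorted(top)

data_rng = random.Random(1)
L, d = 32, 4
Q = [[data_rng.gauss(0, 1) for _ in range(d)] for _ in range(L)]
K = [[data_rng.gauss(0, 1) for _ in range(d)] for _ in range(L)]
V = [[data_rng.gauss(0, 1) for _ in range(d)] for _ in range(L)]
out, kept = probsparse_attention(Q, K, V)
print(len(out), len(kept))
```

The output keeps length L, and all positions outside `kept` carry the same mean(V) vector, which is the redundancy that the later max-pooling step exploits.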

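- How is the ((6326d1c6-fce8-42c5-a1db-57f7ce74e6ca)) issue resolved?

- The random key sampling for each query produces the same sample in every head

- Every self-attention layer first applies linear projections to Q, K and V, so the query and key vectors at the same position differ across heads

- The N sparsest queries selected in each head therefore also differ, which amounts to each head using a different optimization strategy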


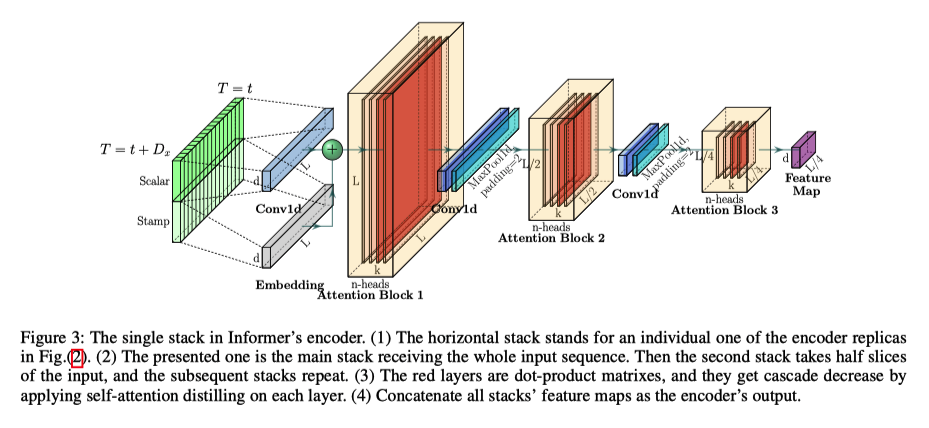

Self-attention distilling

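- Highlights the dominating scores and shortens the input of each successive layer, reducing space complexity to $\mathcal{O}((2-\epsilon) L \log L)$

- As the encoder gets deeper, the output at each position already contains information from the other elements of the sequence, so the input sequence can be shortened

- After the attention layer, most positions hold the same value

- Activation function: [[ELU]]

- Conv1d followed by a max-pooling layer shortens the sequence length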

`class ConvLayer(nn.Module):`
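As a rough illustration of how Conv1d + ELU + max-pooling halves the sequence, here is a single-channel, dependency-free sketch. It is not the `ConvLayer` module itself: the smoothing kernel values, the zero padding, and the pooling settings (kernel 3, stride 2, padding 1) are assumptions chosen for this example.

```python
import math

def conv1d_same(x, kernel):
    """Single-channel 1-D convolution with zero padding; output length equals input length."""
    pad = len(kernel) // 2
    padded = [0.0] * pad + list(x) + [0.0] * pad
    return [sum(w * padded[i + j] for j, w in enumerate(kernel)) for i in range(len(x))]

def elu(v, alpha=1.0):
    return v if v > 0 else alpha * (math.exp(v) - 1.0)

def max_pool1d(x, kernel_size=3, stride=2, padding=1):
    """Max-pooling with -inf padding so padded cells never win the max."""
    padded = [-math.inf] * padding + list(x) + [-math.inf] * padding
    n_out = (len(padded) - kernel_size) // stride + 1
    return [max(padded[i * stride:i * stride + kernel_size]) for i in range(n_out)]

def distill(x, kernel=(0.25, 0.5, 0.25)):
    """Conv1d -> ELU -> MaxPool: roughly halves the sequence length."""
    return max_pool1d([elu(v) for v in conv1d_same(x, kernel)])

sequence = [0.0, 0.0, 0.0, 8.0, 0.0, 0.0, 0.0, 0.0]
print(len(sequence), "->", len(distill(sequence)))
```

With stride 2 each distilling step halves the length, so a dominating value survives while runs of identical positions are collapsed.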

Generative style decoder

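- At inference time, all predictions come from a single forward pass, which avoids dynamic decoding

- In both training and inference, the decoder input sequence has two parts: $X_{feed\_decoder} = concat(X_{token}, X_{placeholder})$

- The known segment just before the prediction point serves as the start token

- A placeholder sequence stands in for the values to be predicted

- After the decoder, each placeholder position has a vector, which is fed into a fully connected layer to produce the prediction

- Why use a generative style decoder #card

- The decoder can capture long-range dependencies between outputs at arbitrary positions

- Avoids accumulated error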
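A small sketch of how the decoder input described above could be assembled for a univariate series. The function name `build_decoder_input`, the parameter names `label_len` and `pred_len`, and the zero placeholder value are assumptions made for illustration.

```python
def build_decoder_input(history, label_len, pred_len, placeholder=0.0):
    """Concatenate the start token (last label_len known values) with pred_len placeholders."""
    if not 0 < label_len <= len(history):
        raise ValueError("label_len must be between 1 and len(history)")
    start_token = list(history[-label_len:])
    return start_token + [placeholder] * pred_len

history = [1.0, 2.0, 3.0, 4.0, 5.0]
decoder_input = build_decoder_input(history, label_len=2, pred_len=3)
print(decoder_input)
```

Because all `pred_len` placeholder positions are present in one input, a single forward pass can fill them in together instead of feeding each prediction back step by step.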

Experiment

Input representation

See Also

Ref

[[Abstract]]

- Advertising and feed ranking are essential to many Internet companies such as Facebook and Sina Weibo. Among many real-world advertising and feed ranking systems, click through rate (CTR) prediction plays a central role. There are many proposed models in this field such as logistic regression, tree based models, factorization machine based models and deep learning based CTR models. However, many current works calculate the feature interactions in a simple way such as Hadamard product and inner product and they care less about the importance of features. In this paper, a new model named FiBiNET as an abbreviation for Feature Importance and Bilinear feature Interaction NETwork is proposed to dynamically learn the feature importance and fine-grained feature interactions. On the one hand, the FiBiNET can dynamically learn the importance of features via the Squeeze-Excitation network (SENET) mechanism; on the other hand, it is able to effectively learn the feature interactions via bilinear function. We conduct extensive experiments on two real-world datasets and show that our shallow model outperforms other shallow models such as factorization machine (FM) and field-aware factorization machine (FFM). In order to improve performance further, we combine a classical deep neural network (DNN) component with the shallow model to be a deep model. The deep FiBiNET consistently outperforms the other state-of-the-art deep models such as DeepFM and extreme deep factorization machine (XdeepFM).

[[Attachments]]

- FiBiNET_2019_Huang.pdf {{zotero-linked-file “attachments:FiBiNET_2019_Huang.pdf”}}

Contributions #card

- Uses a SENET layer to weight the embeddings

- Uses a Bilinear-Interaction Layer for feature crossing

Background

- importance of features and feature interactions

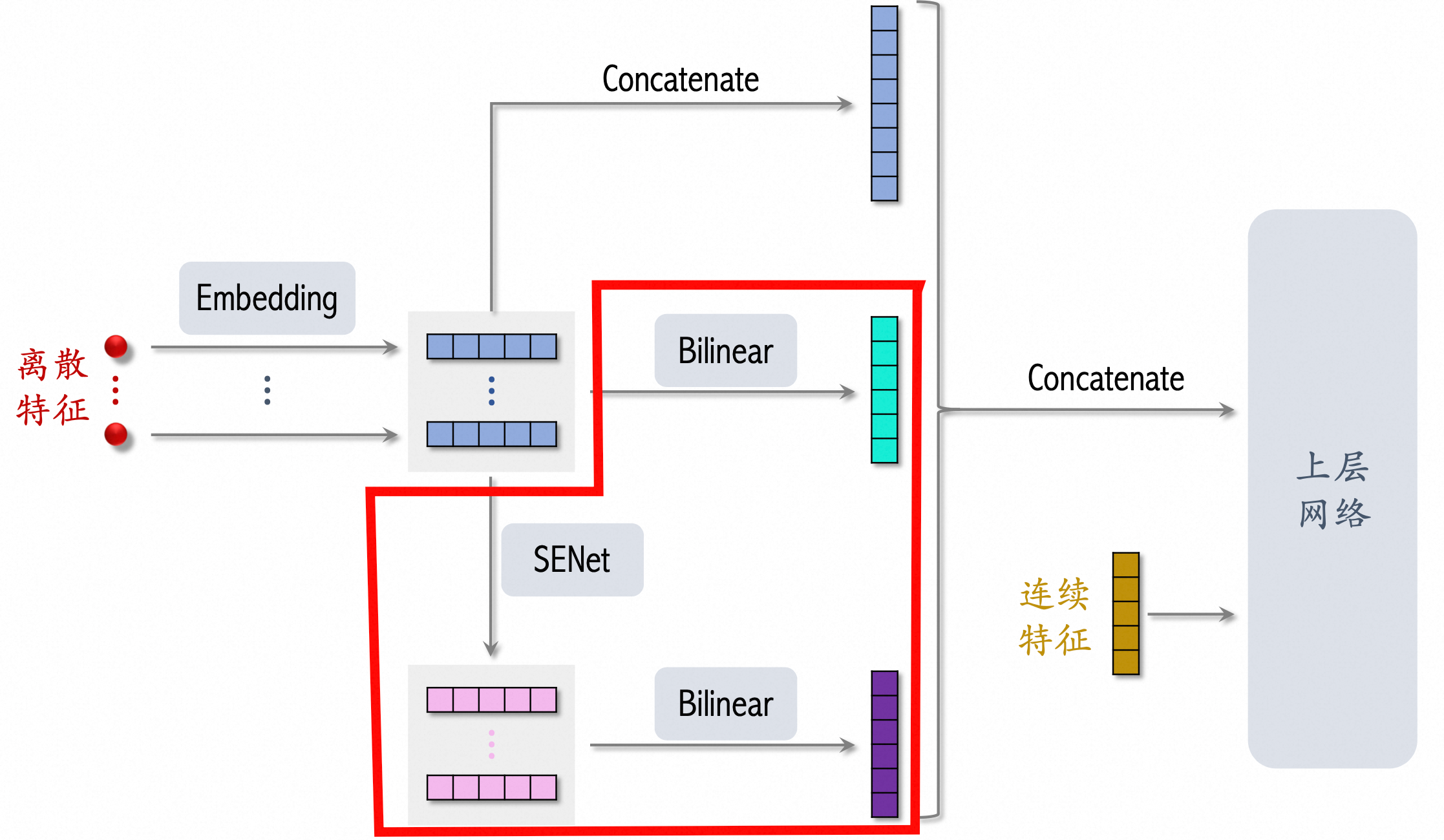

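Network architecture #card

[[SENET]] Squeeze-and-Excitation network: a feature weighting method

- Squeeze: compress f*k into f*1

- Excitation: pass the result through a two-layer DNN to obtain weights of shape f*1

- Re-Weight: multiply the weights with the original f*k embeddings

- The idea is to control the scale of each feature so that important features are strengthened and unimportant ones weakened, making the extracted features more targeted.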
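The three SENET steps can be sketched without any framework as below. The mean-pooling squeeze, the ReLU activations, the reduction to a single hidden unit, and the concrete weight values are assumptions for this toy example; `senet` and `matvec` are hypothetical helper names.

```python
def relu(x):
    return max(0.0, x)

def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]

def senet(E, W1, W2):
    """E: f field embeddings of size k. Returns the re-weighted embeddings and the f weights."""
    z = [sum(e) / len(e) for e in E]                   # Squeeze: f*k -> f*1 (mean pooling)
    hidden = [relu(h) for h in matvec(W1, z)]          # Excitation, layer 1: f -> f/r
    weights = [relu(h) for h in matvec(W2, hidden)]    # Excitation, layer 2: f/r -> f
    reweighted = [[a * v for v in e] for a, e in zip(weights, E)]  # Re-Weight: scale each field
    return reweighted, weights

E = [[1.0, 1.0], [2.0, 2.0], [4.0, 4.0]]   # f=3 fields, k=2 dimensions
W1 = [[0.5, 0.5, 0.0]]                     # 3 -> 1 (reduction, hypothetical values)
W2 = [[1.0], [0.0], [2.0]]                 # 1 -> 3
reweighted, weights = senet(E, W1, W2)
print(weights)
print(reweighted)
```

Each field ends up scaled by one scalar weight, so a field with weight 0 is suppressed entirely while one with a large weight is amplified.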

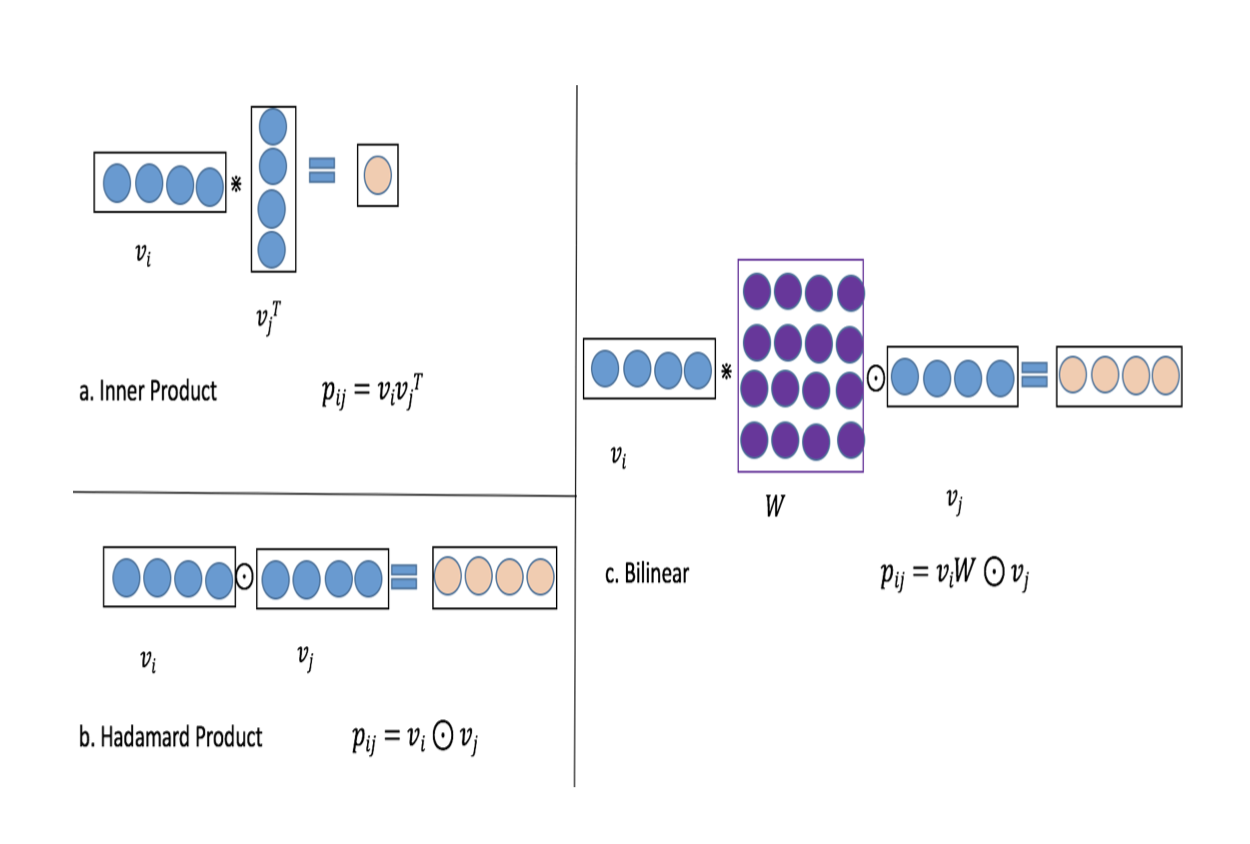

[[Bilinear-Interaction Layer]] → combines the inner product and the Hadamard product, and introduces an extra parameter matrix W to learn feature interactions.

- When crossing different features as v_i * W * v_j, the weight matrix W comes from one of:

- Field-All Type → a single W shared by all fields

- Parameter count → feature * embedding + emb*emb

- Field-Each Type → one W per field

- Parameter count → feature * embedding + field*emb*emb

- Field-Interaction Type → one W per pair of interacting fields

- Parameter count → feature * embedding + field*(field-1)/2*emb*emb

- Compared with [[FFM]], the Bilinear-Interaction Layer substantially reduces the parameter count

- FM parameter count → feature * embedding

- FFM parameter count → feature * field * embedding
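To make the bilinear crossing and the size of W concrete, here is a hedged sketch. `bilinear_pair` computes (v_i · W) ⊙ v_j for one pair; `bilinear_param_count` counts only the W parameters (not the embedding table) and assumes one matrix per unordered field pair for the Field-Interaction type. Both function names and the `kind` strings are illustrative.

```python
def bilinear_pair(v_i, W, v_j):
    """(v_i . W) followed by a Hadamard product with v_j; W is k x k."""
    k = len(v_i)
    v_w = [sum(v_i[a] * W[a][b] for a in range(k)) for b in range(k)]
    return [x * y for x, y in zip(v_w, v_j)]

def bilinear_param_count(num_fields, emb_dim, kind):
    """Number of parameters in W only, for the three sharing schemes."""
    per_matrix = emb_dim * emb_dim
    if kind == "all":            # one W shared by every field
        return per_matrix
    if kind == "each":           # one W per field
        return num_fields * per_matrix
    if kind == "interaction":    # one W per unordered pair of fields (assumption)
        return num_fields * (num_fields - 1) // 2 * per_matrix
    raise ValueError(f"unknown kind: {kind}")

swap = [[0.0, 1.0], [1.0, 0.0]]  # toy W that swaps the two coordinates of v_i
print(bilinear_pair([1.0, 2.0], swap, [3.0, 4.0]))
for kind in ("all", "each", "interaction"):
    print(kind, bilinear_param_count(num_fields=10, emb_dim=8, kind=kind))
```

With an identity W the result reduces to the plain Hadamard product, which shows how the layer generalizes that interaction.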

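- Different ways of crossing features

[[ETA]]: the FM crossing part could try a bilinear layer, with W selected by the combination of link states.

- But the traffic state of a link may change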

Ref

@Applying Deep Learning To Airbnb Search

[[Abstract]]

- The application to search ranking is one of the biggest machine learning success stories at Airbnb. Much of the initial gains were driven by a gradient boosted decision tree model. The gains, however, plateaued over time. This paper discusses the work done in applying neural networks in an attempt to break out of that plateau. We present our perspective not with the intention of pushing the frontier of new modeling techniques. Instead, ours is a story of the elements we found useful in applying neural networks to a real life product. Deep learning was steep learning for us. To other teams embarking on similar journeys, we hope an account of our struggles and triumphs will provide some useful pointers. Bon voyage!

[[Attachments]]

- Applying Deep Learning To Airbnb Search_2018_Haldar.pdf {{zotero-linked-file “attachments:Applying Deep Learning To Airbnb Search_2018_Haldar2.pdf”}}

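Documents Airbnb's journey in exploring deep models.

Business setting: after a guest searches, return an ordered list of listings (each listing is a home or room).

Before deep models, a GBDT was used to score the listings.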

Model Evolution

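- Evaluation metric: [[NDCG]]

- Simple NN

- A single hidden layer with 32 ReLU units; same features and training objective as the GBDT, and performance on par with the GBDT

- Established the pipeline for deep-model training and online serving.

- LambdaRank NN

- [[LambdaRank]] directly optimizes NDCG

- Trained pairwise, with training examples of the form <booked listing, not-booked listing>

- The pairwise loss is multiplied by the change in NDCG caused by swapping the two items, which focuses training on examples near the top of the list

- For example, moving a booked listing from rank 2 to rank 1 matters more than moving a booked listing from rank 10 to rank 9.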
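A minimal sketch of the weighting idea described above: a logistic pairwise loss scaled by the NDCG change from swapping the pair. It assumes binary relevance with a single booked listing per search (so the ideal DCG is 1); the function names are hypothetical and this is not Airbnb's implementation.

```python
import math

def dcg_gain(rank):
    """Discounted gain of a relevant item at a 1-based rank."""
    return 1.0 / math.log2(rank + 1)

def delta_ndcg(rank_booked, rank_other):
    """|NDCG change| from swapping the booked listing with a not-booked one.

    Assumes one booked listing per search, so the ideal DCG equals 1.
    """
    return abs(dcg_gain(rank_booked) - dcg_gain(rank_other))

def lambdarank_pair_loss(score_booked, score_other, rank_booked, rank_other):
    """Pairwise logistic loss weighted by the NDCG change of the swap."""
    pairwise = math.log1p(math.exp(-(score_booked - score_other)))
    return delta_ndcg(rank_booked, rank_other) * pairwise

print(delta_ndcg(2, 1))    # swap between ranks 2 and 1
print(delta_ndcg(10, 9))   # swap between ranks 10 and 9
```

The weight for the swap at the top of the list is much larger than the one near rank 10, so errors near the top dominate the gradient.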

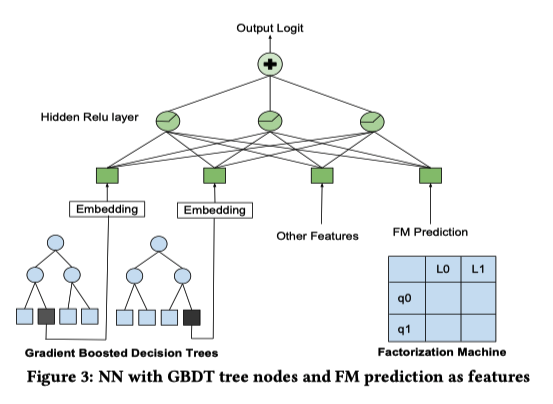

- Decision Tree/Factorization Machine NN

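- GBDT leaf-node indices (as categorical features) and the FM prediction are fed into the NN.

- Deep NN

- 10x training data, 195 features (categorical features expanded to embeddings), two hidden layers: 127 fully connected ReLU units followed by 83 fully connected ReLU units.

- Some DNN features come from other models; the features described earlier in [[@Real-time Personalization using Embeddings for Search Ranking at Airbnb]] are used as well.

- Once the training data reached 1.7 billion examples, NDCG on the test set matched NDCG on the training set. Does this mean offline results can be used to estimate online results?

- Deep models have surpassed human performance on image classification, but it is hard to tell whether they have done so on search. A key difficulty is that human-level performance on a search task is hard to define.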

Failed Models

- Listing ID

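- Embedding the listing id led to overfitting.

- An embedding needs a large amount of data per item to learn that item's value

- Some listings have unique properties, and learning them requires a certain amount of data.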


- Multi-task learning

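- Bookings are sparser than views; long views are correlated with bookings.

- Two tasks, a Booking logit and a Long View logit, share the network. The two labels differ by orders of magnitude; to keep the focus on the booking metric, the long view label is multiplied by log(view_duration).

- In the online experiment, long views increased but bookings stayed essentially flat.

- The authors suspect that long views are driven by high-end listings or by long page descriptions.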

- [[Feature Engineering]]

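- Common feature engineering for GBDT: ratios, sliding-window averages, and feature combinations.

- An NN can cross features automatically through its hidden layers; some preprocessing of the features makes the NN more effective.

- Feature [[Normalization]]

- NNs are sensitive to the scale of numeric features; if an input is too large, gradients during backpropagation become large.

- Normal distribution

- $$(feature\_val - \mu)/\sigma$$

- power law distribution

- $$\log \frac{1+feature\_val}{1+median}$$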
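The two normalization formulas above translate directly into code. This sketch uses only the standard library; the function names are illustrative, and it assumes the feature is not constant (non-zero standard deviation) and that power-law features are non-negative.

```python
import math
import statistics

def normalize_gaussian(values):
    """(feature_val - mean) / std, for roughly normally distributed features."""
    mu = statistics.mean(values)
    sigma = statistics.pstdev(values)  # assumes the feature is not constant
    return [(v - mu) / sigma for v in values]

def normalize_power_law(values):
    """log((1 + feature_val) / (1 + median)), for power-law distributed features."""
    median = statistics.median(values)
    return [math.log((1 + v) / (1 + median)) for v in values]

print(normalize_gaussian([1.0, 2.0, 3.0]))
print(normalize_power_law([0.0, 9.0, 99.0]))
```

In the second example the median value maps to 0 and values a factor of ten away on either side map to symmetric positive and negative values, which compresses the long tail.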

- Feature distribution

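- Feature distributions should be smooth

- Are there outliers?

- Smooth distributions generalize more easily and keep the outputs of higher layers well distributed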

- [[Hyperparameters]]

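- [[Dropout]] can be seen as a form of data augmentation that simulates randomly missing values in the data. After dropping, however, a sample may no longer be valid and becomes an unrealistic scenario that only distracts the model.

- As an alternative, hand-crafted noise drawn according to the feature distributions was added to the training samples; it helped offline but not online.

- Initializing all network weights to zero ([[神经网络参数全部初始化为0]]) does not work; weights use [[Xavier Initialization]], and embeddings use a random uniform distribution over -1 to 1.

- Learning rate: it was hard to improve on [[Adam]] with its default settings; [[LazyAdam]] is used because it trains faster with large embeddings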


- Feature Importance (interpretability, [[可解释性]])

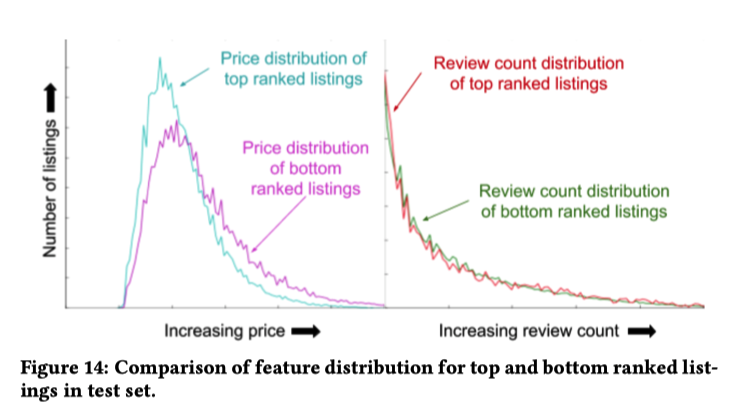

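- Score Decomposition: attribute the NN's score to individual features. This works for [[GBDT]].

- Ablation Test: train one model per removed feature. The problem is that the model can make up for the missing feature from the remaining ones.

- Permutation Test: pick one feature and randomly permute its values. A common method for [[Random Forests]]. The generated examples may not follow the real-world distribution, and a feature may only have an effect jointly with other features.

- TopBot Analysis: compare the distribution of an individual feature at the top and at the bottom of the ranking

- The left plot shows the distribution of listing price; top and bot differ clearly, so the model is sensitive to price.

- The right plot shows the distribution of page views; top and bot are close, so the model does not make good use of this feature.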
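The permutation test mentioned above can be sketched as follows. Everything here is a toy assumption: `toy_model` scores only by price, rows are plain dicts, and importance is measured as the drop in negative mean squared error after shuffling one feature across examples.

```python
import random

def permutation_importance(model, rows, labels, feature, seed=0):
    """Drop in negative MSE after shuffling one feature's values across examples."""
    def neg_mse(candidate_rows):
        return -sum((model(r) - y) ** 2 for r, y in zip(candidate_rows, labels)) / len(labels)

    rng = random.Random(seed)
    values = [r[feature] for r in rows]
    rng.shuffle(values)
    permuted = [{**r, feature: v} for r, v in zip(rows, values)]
    return neg_mse(rows) - neg_mse(permuted)

def toy_model(row):
    # Hypothetical scorer: depends on price only and ignores views.
    return -0.01 * row["price"]

rows = [{"price": float(p), "views": float(v)} for p, v in zip(range(50, 550, 50), range(10))]
labels = [toy_model(r) for r in rows]

print("price:", permutation_importance(toy_model, rows, labels, "price"))
print("views:", permutation_importance(toy_model, rows, labels, "views"))
```

A feature the model ignores gets importance 0. The caveat from the notes still applies: shuffled rows can combine values that never co-occur in real data.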


Oddities

- The paper keeps citing [[Andrej Karpathy]]'s advice: don't be a hero

@Item2Vec: Neural Item Embedding for Collaborative Filtering

[[Abstract]]

- Many Collaborative Filtering (CF) algorithms are item-based in the sense that they analyze item-item relations in order to produce item similarities.

- Recently, several works in the field of Natural Language Processing (NLP) suggested to learn a latent representation of words using neural embedding algorithms. Among them, the Skip-gram with Negative Sampling (SGNS), also known as [[Word2Vec]], was shown to provide state-of-the-art results on various linguistics tasks.

- In this paper, we show that item-based CF can be cast in the same framework of neural word embedding.

- Inspired by SGNS, we describe a method we name item2vec for item-based CF that produces embedding for items in a latent space.

- The method is capable of inferring item-item relations even when user information is not available.

- We present experimental results that demonstrate the effectiveness of the item2vec method and show it is competitive with SVD.

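- How positive samples are defined #card

- Item2Vec assumes that items the same user interacted with in the same session are similar to each other, so their vectors should be close.

- However, pairing every two items within a sequence would generate too many positive samples.

- Item2Vec therefore copies Word2Vec and uses a sliding window: only items that appear shortly before or after a given item are treated as similar to it and form positive samples.

- How negative samples are defined #card

- As in Word2Vec, randomly sample a number of items from the whole item pool and pair them with the current item as negative samples.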
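The positive and negative sample construction described above can be sketched as below. The window size, the uniform negative sampling, and the function names are assumptions for illustration (Word2Vec itself typically samples negatives from a smoothed frequency distribution).

```python
import random

def positive_pairs(session, window=2):
    """Pair each item with the items at most `window` positions before or after it."""
    pairs = []
    for i, center in enumerate(session):
        lo = max(0, i - window)
        hi = min(len(session), i + window + 1)
        for j in range(lo, hi):
            if j != i:
                pairs.append((center, session[j]))
    return pairs

def negative_pairs(center, catalog, num_neg, rng):
    """Pair the center item with items drawn uniformly from the rest of the catalog."""
    candidates = [item for item in catalog if item != center]
    return [(center, rng.choice(candidates)) for _ in range(num_neg)]

session = ["a", "b", "c", "d"]
catalog = ["a", "b", "c", "d", "e", "f", "g"]
print(positive_pairs(session, window=1))
print(negative_pairs("a", catalog, num_neg=3, rng=random.Random(0)))
```

With `window=1` a session of four items yields six positive pairs instead of the twelve ordered pairs that full pairwise combination would produce.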

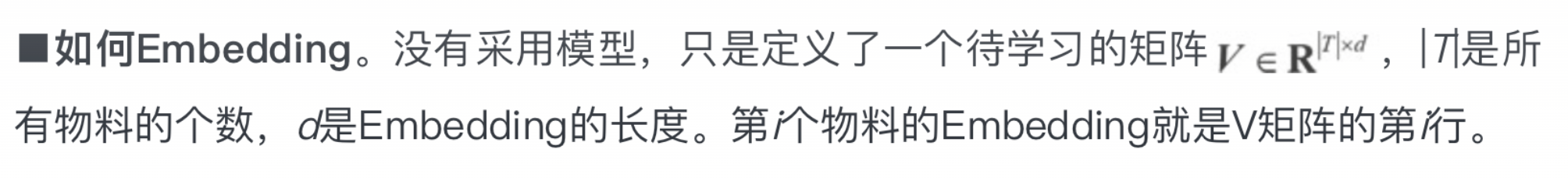

- How items are embedded #card

- How the loss function is defined #card

- Word2Vec negative-sampling loss

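For reference, a minimal sketch of the negative-sampling loss for one center item, one positive item, and a list of negatives: $-\log \sigma(u \cdot v^{+}) - \sum_{n} \log \sigma(-u \cdot v^{-}_n)$. The function names and the toy vectors are assumptions; a real implementation would use separate input and output embedding tables and batch the computation.

```python
import math

def log_sigmoid(x):
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def sgns_loss(center, positive, negatives):
    """Negative-sampling loss: pull the positive pair together, push negatives apart."""
    loss = -log_sigmoid(dot(center, positive))
    for neg in negatives:
        loss -= log_sigmoid(-dot(center, neg))
    return loss

center = [1.0, 0.0]
aligned = [2.0, 0.0]     # similar to the center item
opposite = [-2.0, 0.0]   # dissimilar to the center item

print(sgns_loss(center, aligned, [opposite, opposite]))   # small loss: good embedding
print(sgns_loss(center, opposite, [aligned, aligned]))    # large loss: bad embedding
```

The loss is low when the positive item's vector aligns with the center item and the negatives point away, which is the geometry the positive and negative sample definitions aim for.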