transformer

[[Abstract]]

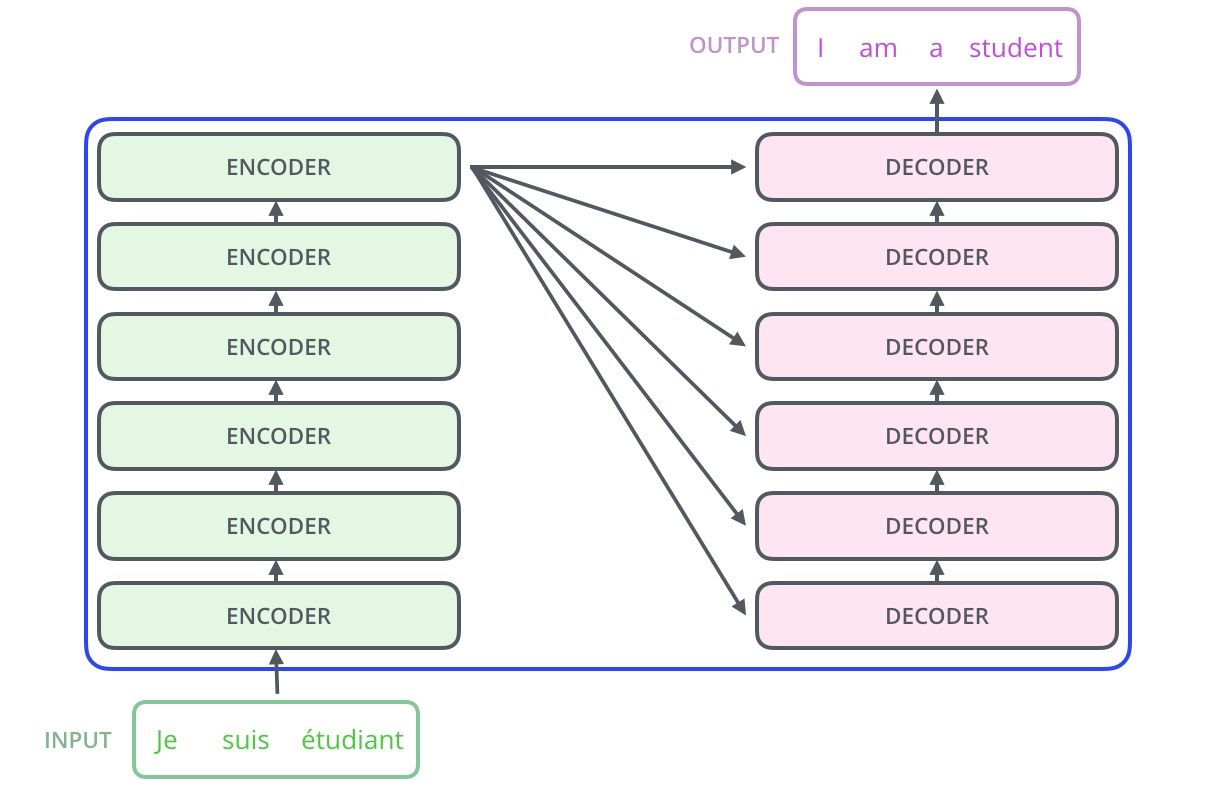

- The dominant sequence transduction models are based on complex recurrent or convolutional neural networks that include an encoder and a decoder. The best performing models also connect the encoder and decoder through an attention mechanism. We propose a new simple network architecture, the Transformer, based solely on attention mechanisms, dispensing with recurrence and convolutions entirely. Experiments on two machine translation tasks show these models to be superior in quality while being more parallelizable and requiring significantly less time to train. Our model achieves 28.4 BLEU on the WMT 2014 English-to-German translation task, improving over the existing best results, including ensembles, by over 2 BLEU. On the WMT 2014 English-to-French translation task, our model establishes a new single-model state-of-the-art BLEU score of 41.8 after training for 3.5 days on eight GPUs, a small fraction of the training costs of the best models from the literature. We show that the Transformer generalizes well to other tasks by applying it successfully to English constituency parsing both with large and limited training data.

[[Attachments]]

Model Architecture

Encoder and Decoder Stacks

- [[Decoder]]

- The second sub-layer is multi-head attention, which captures the attention relationship between the encoder output and the decoder output.

- k and v come from the encoder; q comes from the previous decoder layer.


Input Embedding

- Attention is → [[Scaled Dot-Product Attention]]
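
The cross-attention described in the decoder notes (q from the decoder, k and v from the encoder output) can be sketched with scaled dot-product attention, softmax(QKᵀ / sqrt(d_k)) V. This is a minimal illustration under stated assumptions, not the paper's implementation: the helper names `softmax` and `scaled_dot_product_attention` and the toy vectors are made up for the example, the learned projection matrices and the split into multiple heads are left out, and plain Python lists are used to avoid any dependency.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(queries, keys, values):
    """Compute softmax(Q K^T / sqrt(d_k)) V for lists of row vectors.

    Returns (outputs, weights); weights[i][j] is how strongly
    query i attends to key j.
    """
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # One scaled dot product per key.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in keys]
        w = softmax(scores)
        # Weighted sum of the value vectors.
        out = [sum(wj * v[c] for wj, v in zip(w, values))
               for c in range(len(values[0]))]
        weights.append(w)
        outputs.append(out)
    return outputs, weights

# Toy data (hypothetical): 3 encoder positions, 2 decoder positions, d_k = 4.
encoder_output = [[1.0, 0.0, 0.0, 0.0],
                  [0.0, 1.0, 0.0, 0.0],
                  [0.0, 0.0, 1.0, 0.0]]
decoder_states = [[2.0, 0.0, 0.0, 0.0],
                  [0.0, 0.0, 2.0, 0.0]]

# Cross-attention: q from the decoder, k and v from the encoder output.
outputs, weights = scaled_dot_product_attention(
    decoder_states, encoder_output, encoder_output)
```

Each row of `weights` sums to 1, and each decoder position puts most of its weight on the encoder position it is most similar to.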

[[Position-wise Feed-Forward Networks]]

Embeddings and Softmax

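- Weight sharing

- Decoder and encoder embedding layers

- The original task is English-German translation. The vocabulary is built with BPE, so the smallest unit is the subword, and the two languages share the same subwords.

- Numerals and symbols can be modeled jointly across languages.

- Embedding layer and FC layer weights in the decoder

- The embedding layer uses a word's one-hot vector to look up the corresponding vector.

- The FC layer takes a vector and produces a softmax probability for each word. The row that matches the word gives the largest dot product with the vector, so that word gets the highest softmax probability.

- For two numbers with a fixed sum, the product is largest when the two numbers are equal.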

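
The sharing between the decoder embedding layer and the FC layer can be sketched as one matrix used in both directions: a row lookup on the way in, and a dot product against every row on the way out. The names `shared`, `embed`, and `output_logits` and the toy sizes are assumptions for illustration. The rows are normalized to unit length here, also an assumption, so that the matching row is guaranteed to give the largest dot product; in a trained model the rows are not normalized and the claim holds only as an intuition.

```python
import math
import random

random.seed(0)
VOCAB_SIZE, D_MODEL = 6, 8  # toy sizes, chosen only for this sketch

def unit(v):
    """Scale a vector to length 1."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

# One shared weight matrix: row i is the vector for token i.
# Assumption for the demo: rows are unit-normalized.
shared = [unit([random.gauss(0.0, 1.0) for _ in range(D_MODEL)])
          for _ in range(VOCAB_SIZE)]

def embed(token_id):
    """Embedding lookup; the same result as one_hot(token_id) @ shared."""
    return shared[token_id]

def output_logits(hidden):
    """Tied FC layer: hidden @ shared^T, one dot product per vocabulary row."""
    return [sum(h * w for h, w in zip(hidden, row)) for row in shared]

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

token_id = 3
logits = output_logits(embed(token_id))   # feed the embedding straight back
probs = softmax(logits)
predicted = max(range(VOCAB_SIZE), key=lambda i: probs[i])
```

With unit-length rows, the logit for the matching row is the squared length of the vector (1), while every other logit is a cosine similarity below 1, so `predicted` equals `token_id`.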

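Self-attention on its own treats the input like a bag-of-words model ([[词袋模型]]) and ignores the positional relationships between words; [[Position Representation]] is introduced to make up for this shortcoming.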
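
The bag-of-words behavior can be checked directly: without position information, shuffling the input tokens only shuffles the self-attention outputs, so the set of outputs is unchanged. The sketch below is an illustration under assumptions rather than the paper's code: `self_attention` uses Q = K = V with no learned projections, `same_bag` and the toy token vectors are hypothetical, and the sinusoidal encoding from the paper stands in for the position representation.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(xs):
    """Self-attention with Q = K = V = xs (learned projections omitted)."""
    d = len(xs[0])
    out = []
    for q in xs:
        w = softmax([sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in xs])
        out.append([sum(wj * v[c] for wj, v in zip(w, xs)) for c in range(d)])
    return out

def positional_encoding(pos, d_model):
    """Sinusoidal encoding: sin on even dimensions, cos on odd dimensions."""
    pe = []
    for i in range(0, d_model, 2):
        angle = pos / (10000 ** (i / d_model))
        pe.extend([math.sin(angle), math.cos(angle)])
    return pe[:d_model]

def add_positions(xs):
    """Add the encoding for position p to the p-th input vector."""
    d = len(xs[0])
    return [[x + p for x, p in zip(vec, positional_encoding(pos, d))]
            for pos, vec in enumerate(xs)]

def same_bag(a, b, tol=1e-9):
    """True if a and b hold the same rows, ignoring row order."""
    return all(abs(x - y) < tol
               for ra, rb in zip(sorted(a), sorted(b))
               for x, y in zip(ra, rb))

# Toy token vectors (hypothetical) and the same tokens in a different order.
tokens = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 1.0]]
shuffled = [tokens[2], tokens[0], tokens[1]]

same_without_positions = same_bag(self_attention(tokens),
                                  self_attention(shuffled))
same_with_positions = same_bag(self_attention(add_positions(tokens)),
                               self_attention(add_positions(shuffled)))
```

Here `same_without_positions` is true and `same_with_positions` is false: once positions are added, the same words in a different order produce different outputs.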

[[train]]